It appears Valve has been developing a standalone XR headset, codenamed ‘Deckard’, for some time. Now, an industry insider has apparently gotten a peek at the headset’s design, calling it “quite amazing,” further noting it’s potentially arriving sometime next year.

Stan Larroque, Founder of XR hardware company Lynx, confirmed in a recent X post that he’s actually seen the design for Valve’s next XR headset.

The design of Valve next HMD is quite amazing!

— Stan Larroque (@stanlarroque) May 17, 2025

Larroque further confirmed that neither he nor his company Lynx, which released the Lynx R-1 mixed reality headset, is under any type of non-disclosure agreement (NDA).

Larroque tells Road to VR that Valve’s Deckard won’t compete against Lynx’s upcoming hardware, as they separately “address two different markets [and] price points.”

Still, beating around the bush somewhat, Larroque tells us Valve and Lynx “might share suppliers for some components,” which definitely smells like a supply chain leak.

“I would be equally pissed if Lynx nextgen ID got leaked so I won’t share more,” Larroque says in an X post. “I’m just excited for good new XR HMDs. The HMD-making world is so small, we all share the same suppliers for some components.”

Furthermore, he tells Road to VR that he’s heard that mass production and eventual availability are slated for 2026, which differs slightly from a previous report wherein leaker and data miner ‘Gabe Follower’ alleged Deckard would arrive by the end of 2025, priced at $1,200.

While Valve hasn’t confirmed anything yet, the rumor mill has been drumming up its fair share of speculation ever since the Deckard naming scheme was discovered by data miners in January 2021.

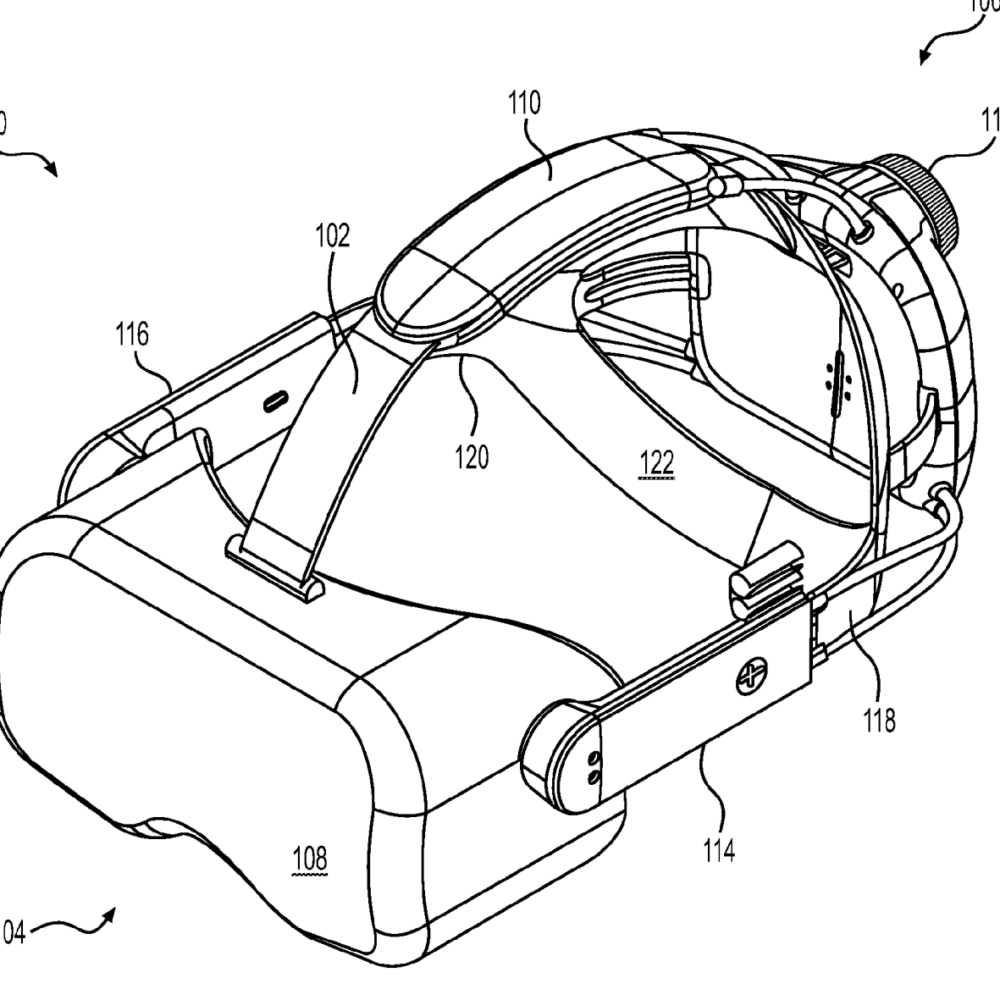

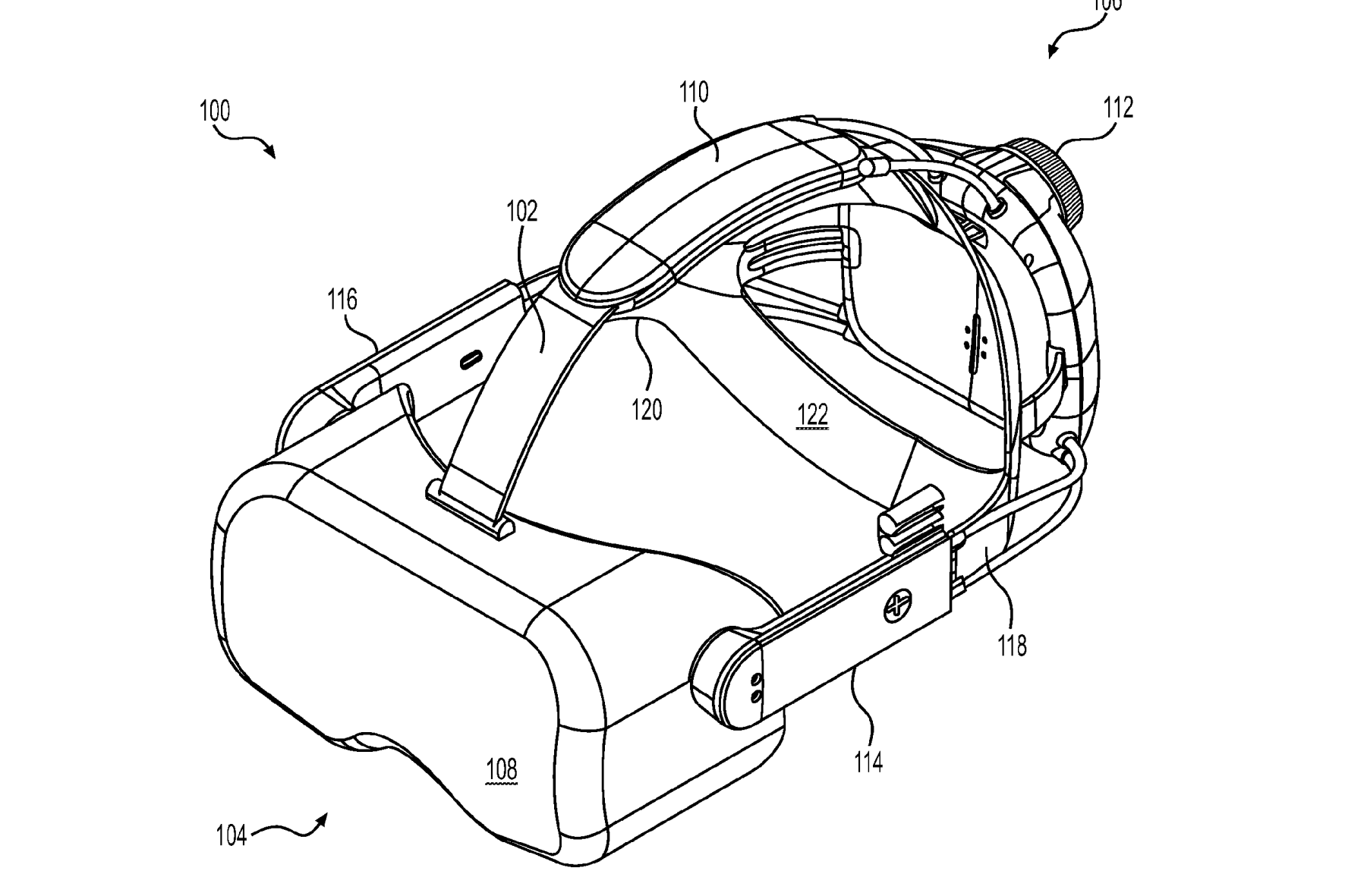

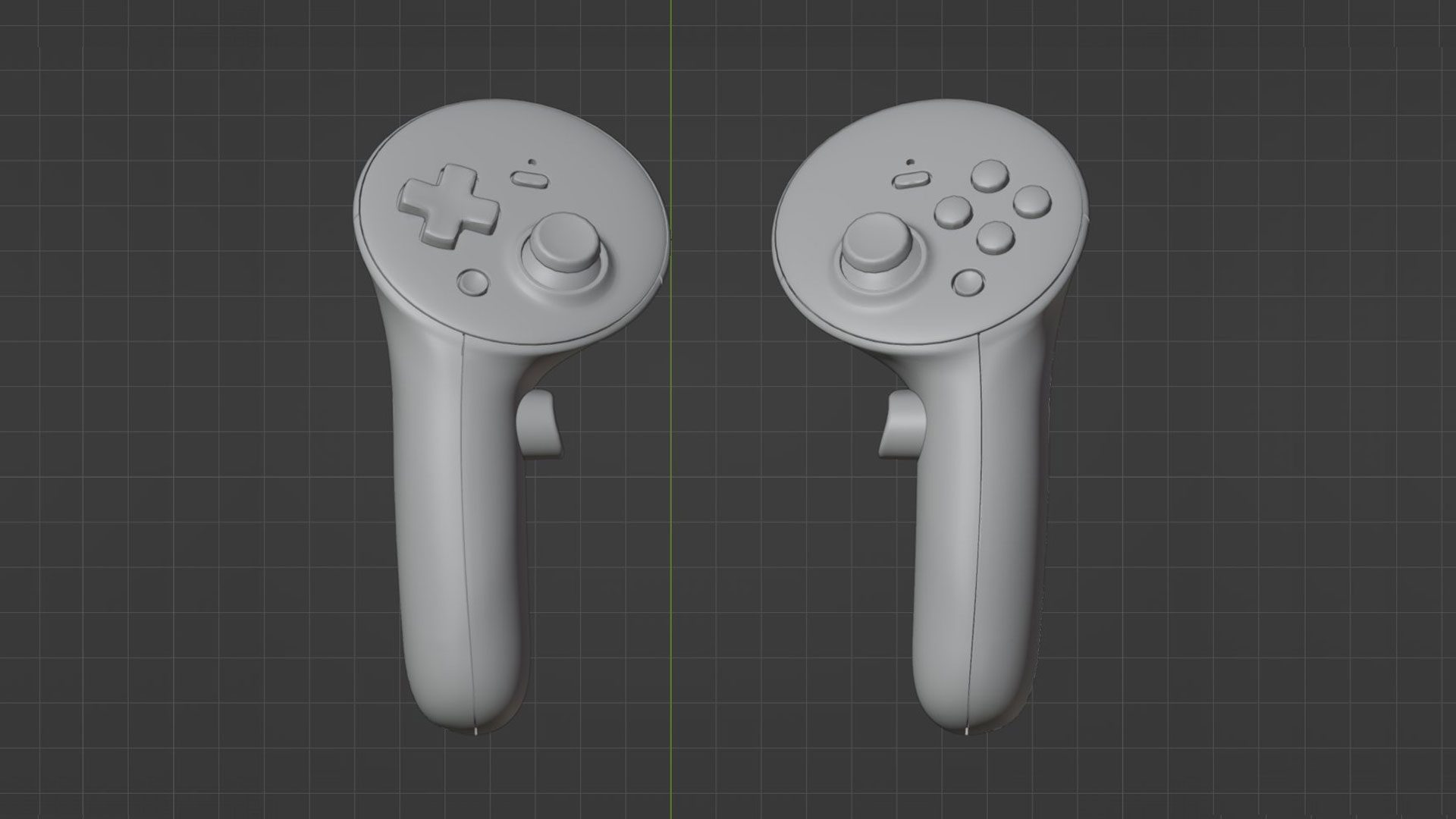

There have been leaked prototype designs (seen above) from 2022, as well as leaked 3D models hidden in a SteamVR update late last year (seen below), which appeared to show off a new VR motion controller, codenamed ‘Roy’.

Then, last month, tech analyst and VR pundit Brad ‘SadlyItsBradley’ Lynch reported Valve was gearing up production for the long-awaited device, as evidenced by Valve’s recent importation of equipment to manufacture VR headset facial interfaces inside the USA.

Lynch alleges the equipment in question “is being provided by Teleray Group who also manufactured the gaskets for the Valve Index and HP G2 Omnicept.”

Exactly what and when are still relatively big question marks, although it appears Valve is moving forward with its standalone XR headset at an opportune time. Provided Larroque’s supply chain leaks are true, and it is indeed coming in 2026, a number of previous reports suggest there will be some healthy competition out there when it does.

In July 2024, The Information alleged Meta is planning to release two flagship consumer headsets sometime in 2026, codenamed ‘Pismo Low’ and ‘Pismo High’. Beyond that, a competitor to Apple Vision Pro, tentatively thought of as ‘Quest Pro 2’, is reported to arrive in 2027. Meanwhile, we’re waiting for any real shred of evidence to come from Apple of any forthcoming headset.

By then, Samsung’s Project Moohan should be in the wild, which, when it launches in late 2025, will run Google’s upcoming Android XR operating system. The device is slated to bring the full-fat Android App Store to an XR device for the first time in addition to XR content.

While we’d expect Valve to skip the flashy keynotes and simply seed developers first with hardware in its usual low-key manner, you never know when a random purchase link might just pop up on Steam, so we’ll be keeping our eyes peeled from now until whenever.