Samsung is reportedly working on a pair of smart glasses that could be more advanced than the Ray-Ban Meta rivals it already has on the way.

Android Authority says it has found evidence of a third pair of smart glasses in the source code of Samsung’s upcoming One UI 9 firmware: a new model number, ‘SM-O500’, code-named ‘Haean’.

Two model numbers are already known: ‘SM-O200P’ and ‘SM-O200J’, code-named ‘Jinju’. These are likely tied to the Android XR-based smart glasses that Samsung recently confirmed will release sometime this year.

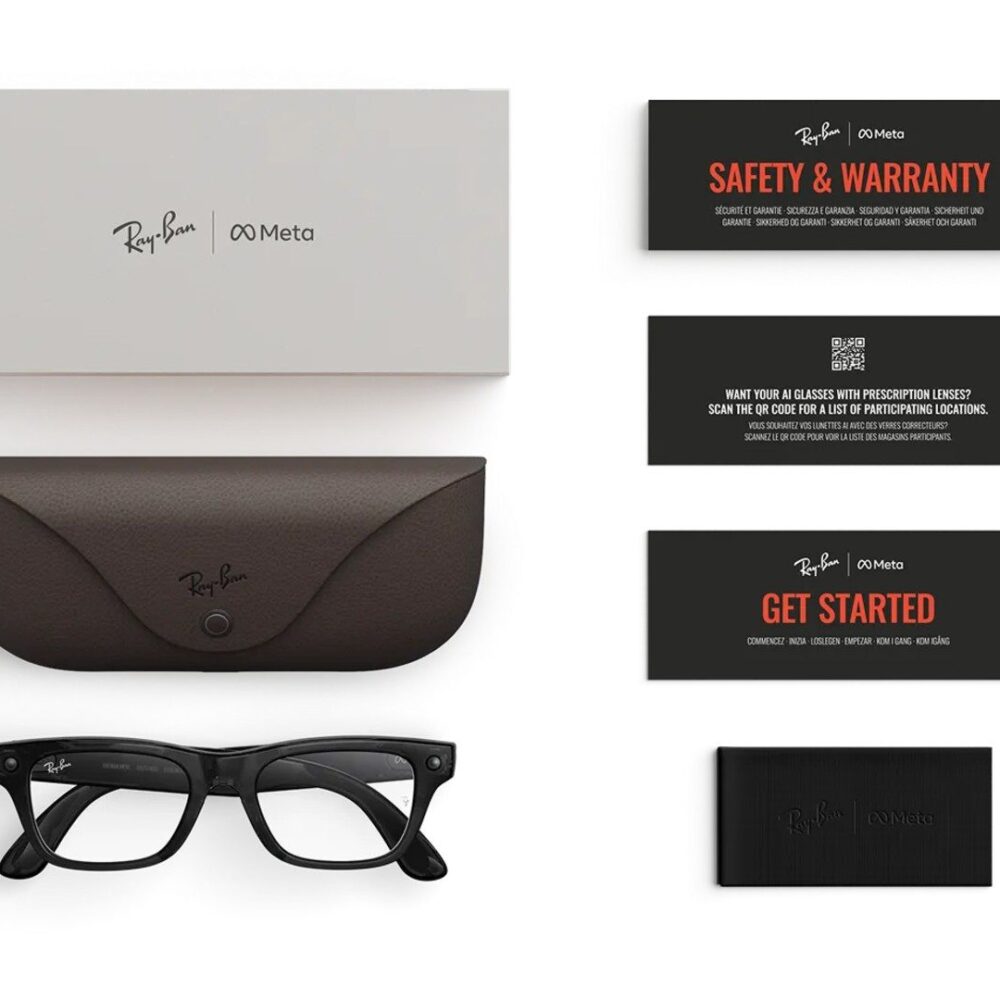

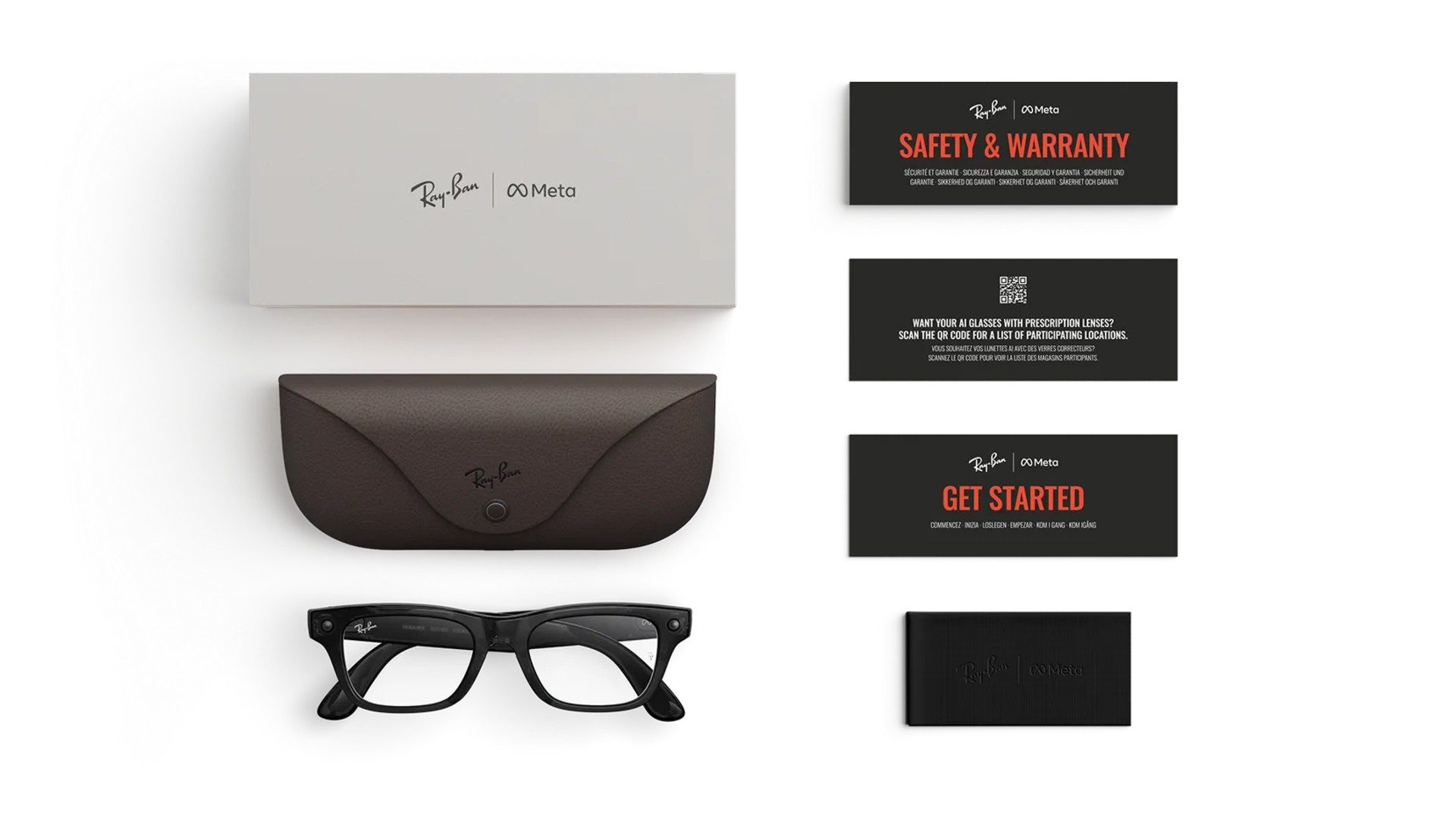

Those are expected to resemble Ray-Ban Meta in that they’ll essentially be ‘audio-only’ glasses, with microphones, a camera and speakers but no display of any kind.

Because SM-O500 follows the same numbering and lettering scheme as the two known models, it could indicate that Samsung is already building ecosystem support for this apparent next-gen device.

Based on prior rumors, SamMobile further suggests it may be a version with a built-in display arriving in 2027, similar to the Meta Ray-Ban Display ($800), which launched in the US late last year.

Granted, as Android Authority notes, its sweep of the One UI 9 source code isn’t a smoking gun. APK teardowns like this can reveal upcoming products, but what turns up in the code doesn’t always make it to a public release.

What we do know so far: Google, the creator of Android XR, announced last year that it was partnering with Samsung, Gentle Monster and US-based eyewear brand Warby Parker to release the first generation of Android XR-based smart glasses.

Google also hopes to release a model with built-in displays for visual output. The company showed off two prototypes last year, one monocular and one stereoscopic, to demonstrate Android XR’s ability to adapt to different hardware configurations.

Still, there’s no firm release date yet for any of the Android XR smart glasses. The ecosystem tie-ins in One UI 9 (based on Android 17) could mean we’ll learn more soon, however. Android 17 is expected in June 2026, with One UI 9 following a month later, and either release could hold more clues.