After multiple delays, Pimax has finally begun shipping its next PC VR headset, albeit in “small batches,” which arrive with a fabric headstrap—something of a temporary solution until the company can ship out its official headstrap.

The News

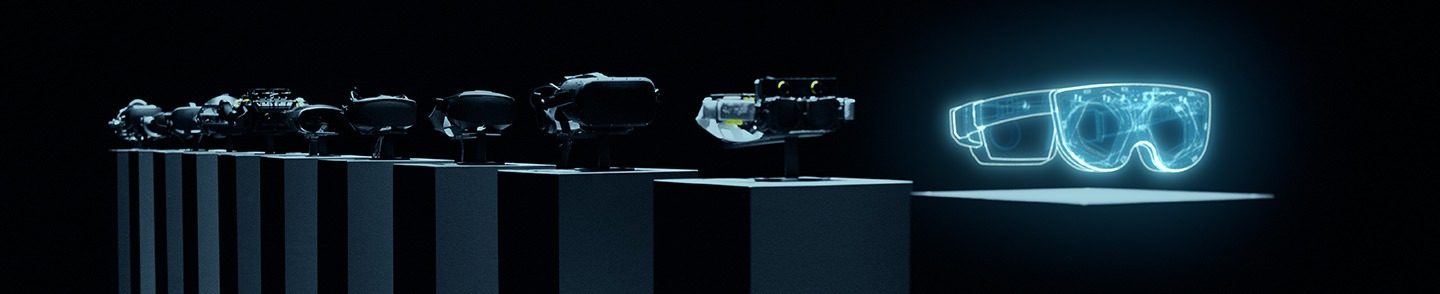

Dream Air is Pimax’s first thin and light PC VR headset, which is set to arrive with Sony’s high-end micro-OLED panels, packing in a 13.6MP (3,840 × 3,552) per-eye resolution.

Now, Pimax told Road to VR that it actually began shipping Dream Air in “small batches” before the end of the year for the purposes of external beta testing.

While official shipments are set to kick off sometime this month, a few users have already received Dream Air with what Jaap Grolleman, Pimax’s Head of Communications, describes as a stopgap measure to get the first units out the door.

“We’re still working on the final backstrap, but we don’t want to make that a showstopper to start shipping and start collecting feedback on the headset,” Grolleman said in a recent video.

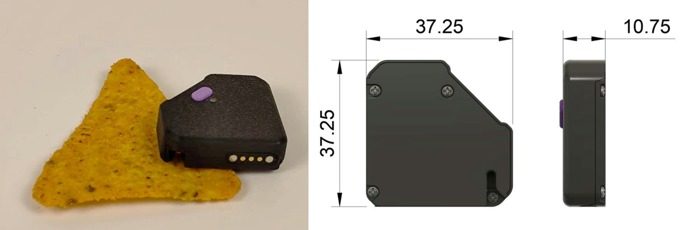

Those early batches of Pimax Dream Air are shipping with what the company calls its “2D headstrap,” as it’s made out of fabric, with Grolleman noting that it’s “perfectly fine to use, even in long sessions as it hugs your head from behind and slightly above.”

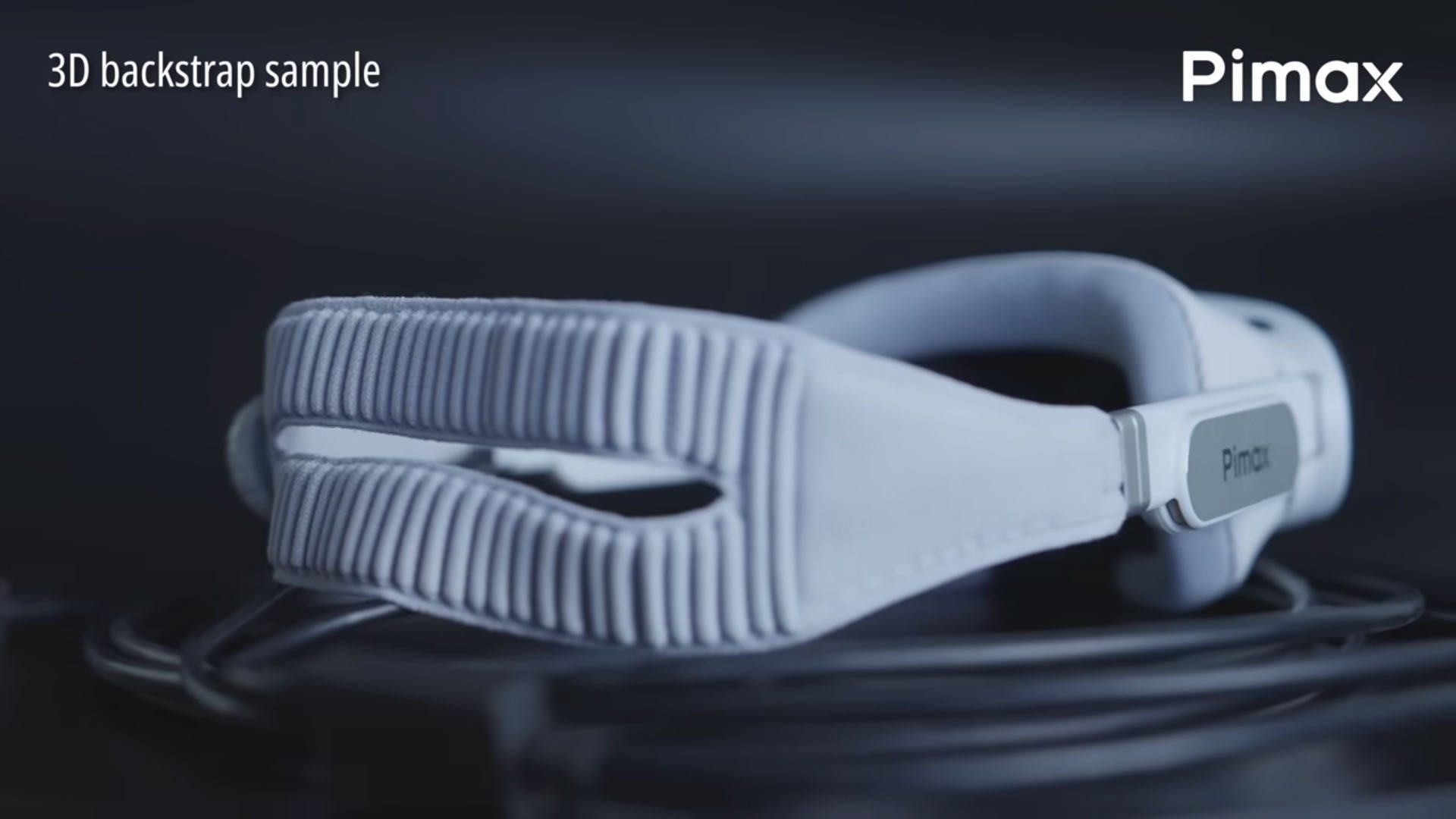

A “3D headstrap,” more of an Apple Vision Pro-inspired knit affair, is said to arrive later for those who initially received the 2D strap with their order.

Pimax hasn’t provided info on when the 3D strap will arrive, or when the company will cut off shipments including the 2D strap.

Notably, Pimax says it’s also developing a “hard backstrap” with off-ear audio, which will be available sometime after Dream Air begins its wider rollout.

As for Dream Air SE—the cheaper variant which uses 6.5MP (2,560 × 2,560) per-eye displays—Pimax says small batches will begin shipping out in February 2026.

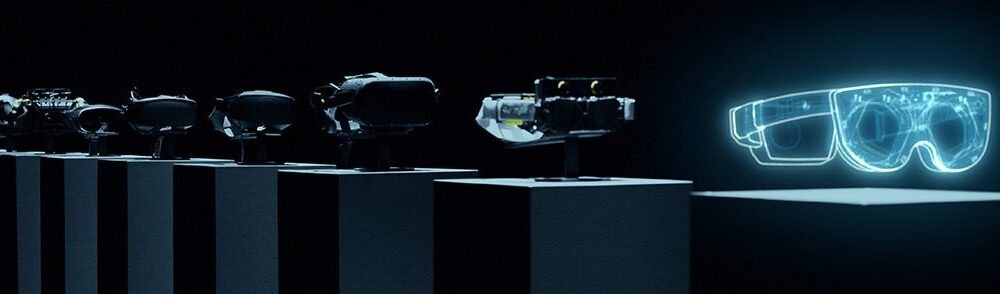

Pimax initially announced Dream Air last December, as it hoped to enter the emergent thin and light PC VR headset segment, which includes entries such as Bigscreen Beyond and Shiftall MaganeX Superlight 8K. The headset, however, suffered a number of delays past its planned May 2025 launch.

My Take

If you’ve been following Pimax, you already know this is how they operate: official announcements and initial shipping dates feel more like walking into a brainstorming session, as the company often changes designs, specs, and release windows multiple times before official release. Along the way, the company tends to announce other devices, making the reporting process more like taking apart a watch to see what time it is.

On the face of it, you might think that’s fairly amateurish behavior, but Pimax has proven it can do what few companies can: publicly iterate with the expectation that it will eventually deliver.

It’s been that way ever since the company funded its original 2017 Pimax “8K” headset via Kickstarter, back when Pimax announced it was releasing the first consumer-oriented wide-FOV PC VR headset alongside a bevy of modular accessories. Some of those never came, and some arrived two years later.

Okay, maybe that was amateurish, but the company is still here, and still serving up competitive hardware, which says something.