Palmer Luckey, founder of defense startup Anduril, revealed more capabilities of its EagleEye XR glasses, this time showing off its wide field-of-view (FOV) night vision.

Anduril revealed EagleEye late last year, showing off an impressive (if not outright terrifying) set of augmented reality capabilities the company hopes to eventually serve up to U.S. soldiers. Luckey, who also founded Oculus in 2012, has now shown off a little more of the system’s night vision.

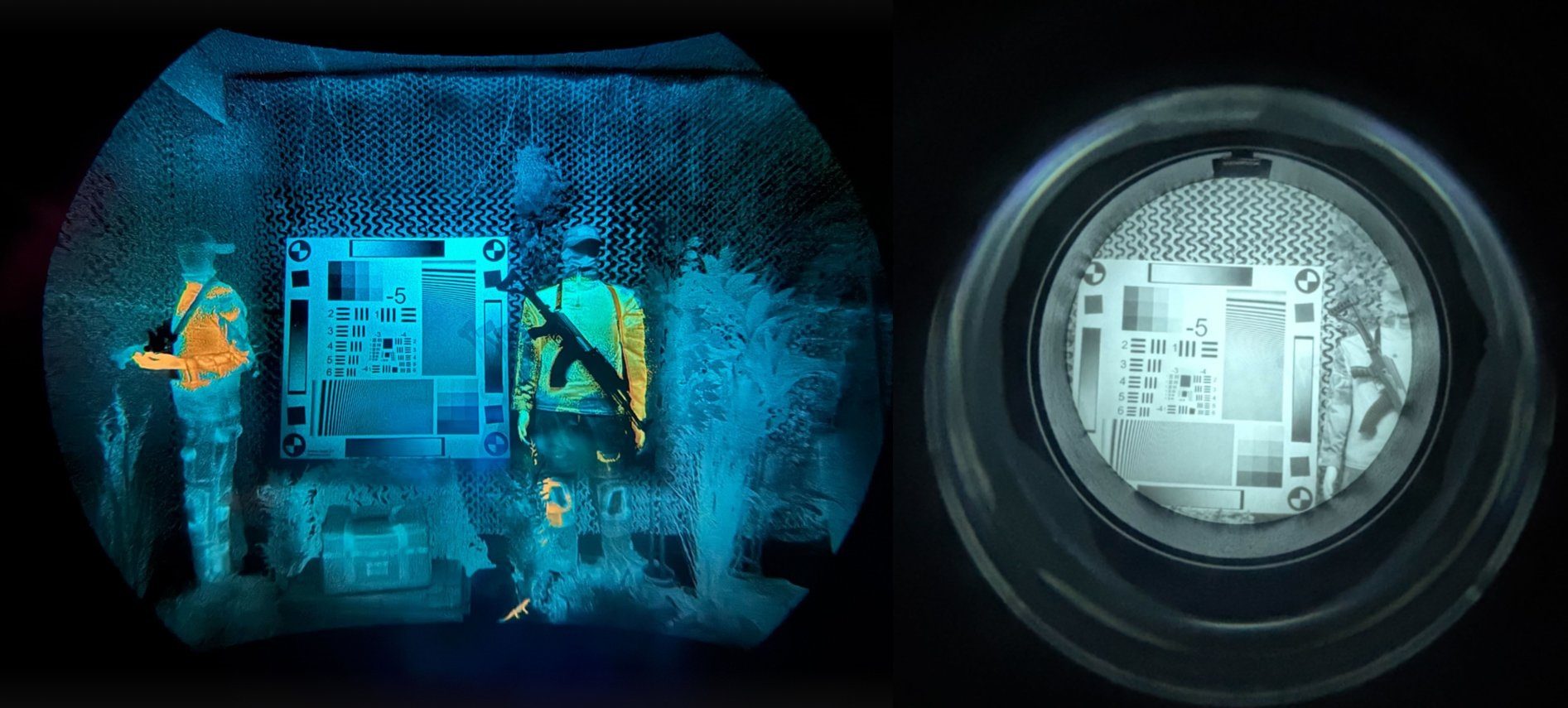

“The difference is night and day,” Luckey says in an X post. “The digital night vision of the EagleEye Family of Systems delivers an 84 degree field of view, stereo thermal fusion to expose hidden threats, and a 4K display for enhanced warfighter perception.”

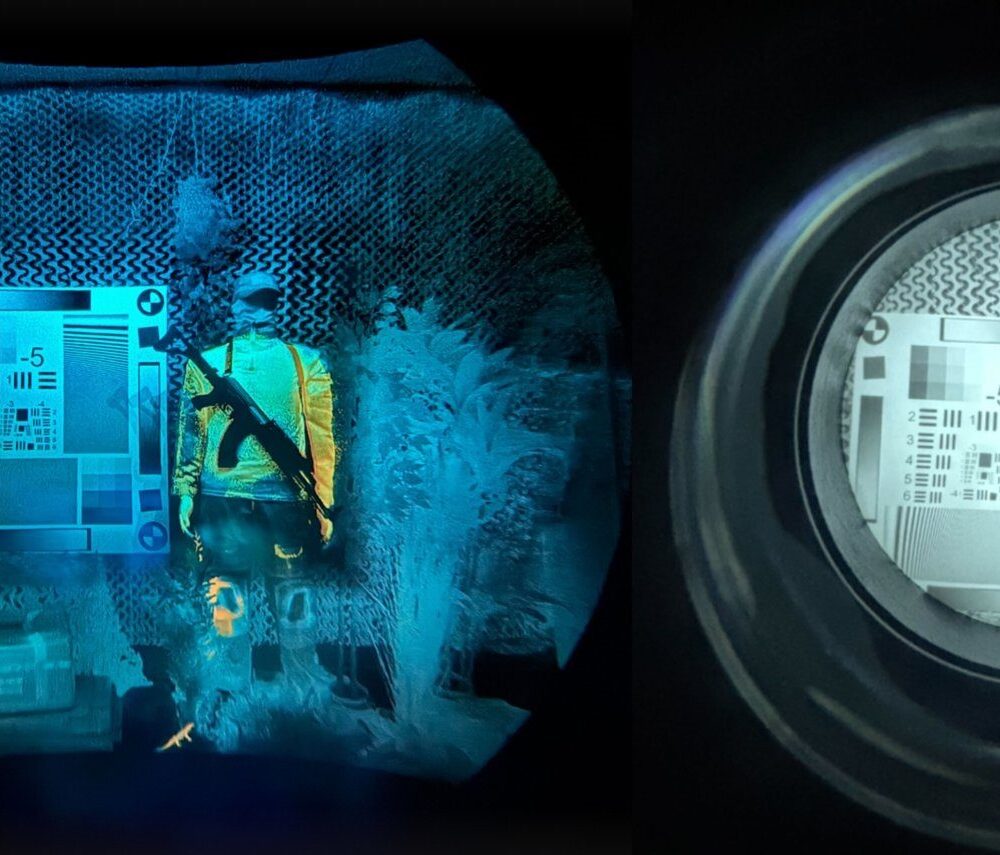

Luckey also showed off a visual comparison between EagleEye (left) and PVS-31 (right), the latter of which is a conventional binocular-style night vision system currently used by elite combat units, such as those under SOCOM, the Rangers, SEALs, and MARSOC.

That said, the two systems are very different, about as far from each other as a smartphone is from a digital Casio watch.

According to Anduril, EagleEye offloads some of the front-heaviness of its low-light and thermal sensors by integrating them into a sensor suite connected directly to the helmet. The sensor feed is then relayed to the user’s display, which is housed in a pair of AR glasses with included ballistic and laser protection.

What’s more, the system also patches into a bevy of external data streams, including real-time info sourced from the company’s AI-driven Lattice network of surveillance and defense devices.

This comes amid Anduril’s bid for a U.S. Army contract against rival defense company Rivet. Called the Soldier Borne Mission Command (SBMC), the new contract is essentially set to revamp the previous 10-year, $22 billion Integrated Visual Augmentation System (IVAS) project originally awarded to Microsoft in 2018, which the company hoped to fulfill by adapting its HoloLens 2 AR platform for combat roles.

In February 2025, it was revealed Anduril would be taking over the older IVAS contract, which was thought to give the company a head start on competing for SBMC.

Notably, Anduril partnered with Meta in May 2025 on combat-focused XR systems. At the time, the companies said the partnership would aim to deliver “the world’s best AR and VR systems for the U.S. military.”

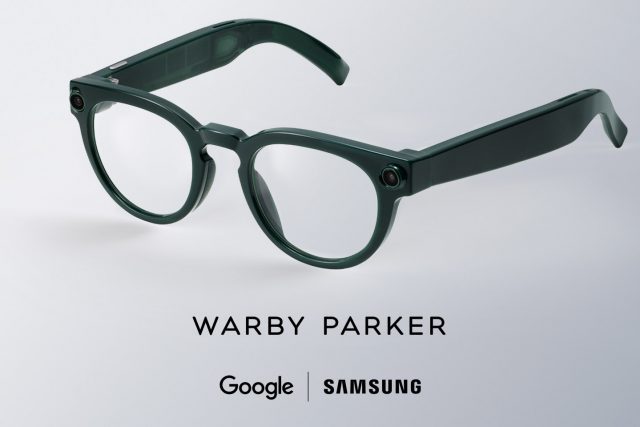

Anduril says it’s also partnered with EssilorLuxottica’s Oakley Standard Issue, Qualcomm, and Gentex, which the company says “lowers cost, accelerates development, and ensures a path for continuous innovation.”