San Francisco-based XR glasses company VITURE announced it’s secured $100 million in Series B financing, which the company says will aid in global expansion of its consumer XR glasses.

Viture initially announced in October 2024 that it had secured a Series B. The company now says its most recent tranche has brought the Series B total to $100 million, bringing overall funding to $121.5 million, according to Crunchbase data.

Previous investors include Singtel Innov8, BlueRun Ventures, BAI Capital, and Verity Ventures, with the company noting that some strategic investors in the Series B “prefer to remain undisclosed at this time.”

The company says its Series B will allow it to expand its consumer XR glasses globally through retail and distribution networks, grow its enterprise offerings, and further develop its hardware and AI-powered software ecosystems.

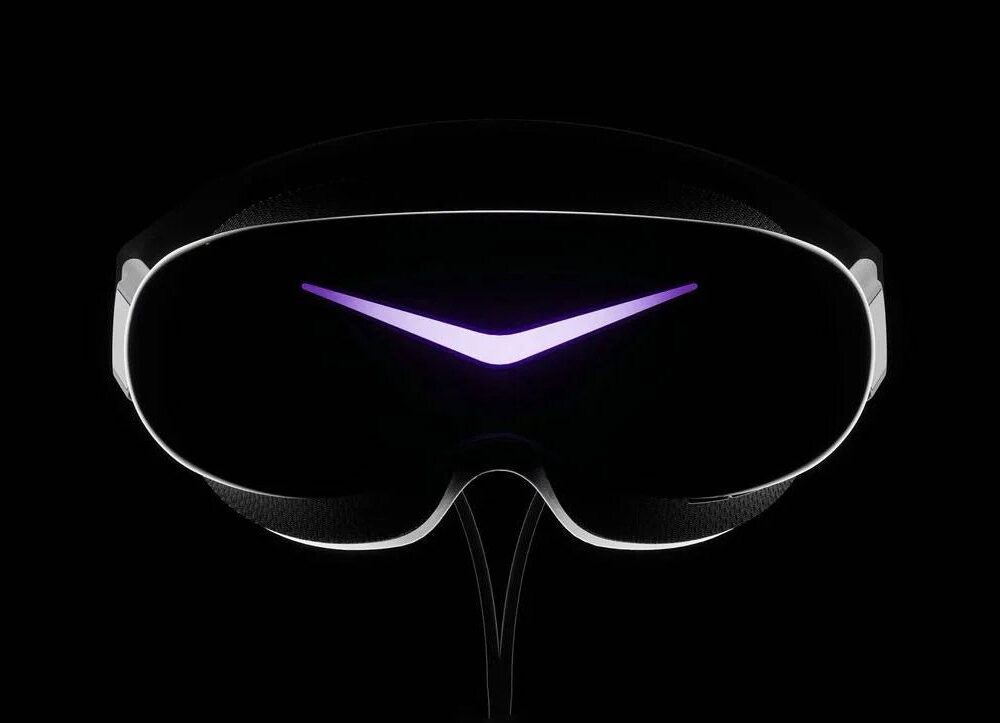

This follows the July announcement of the company’s Luma series and Beast, phone/PC-tethered XR glasses that use birdbath-style optics, which the company is aiming at casual content consumption and productivity.

Meanwhile, the XR glasses segment is heating up, although not uniformly in the direction of the sort of casual content-focused specs that Viture is developing.

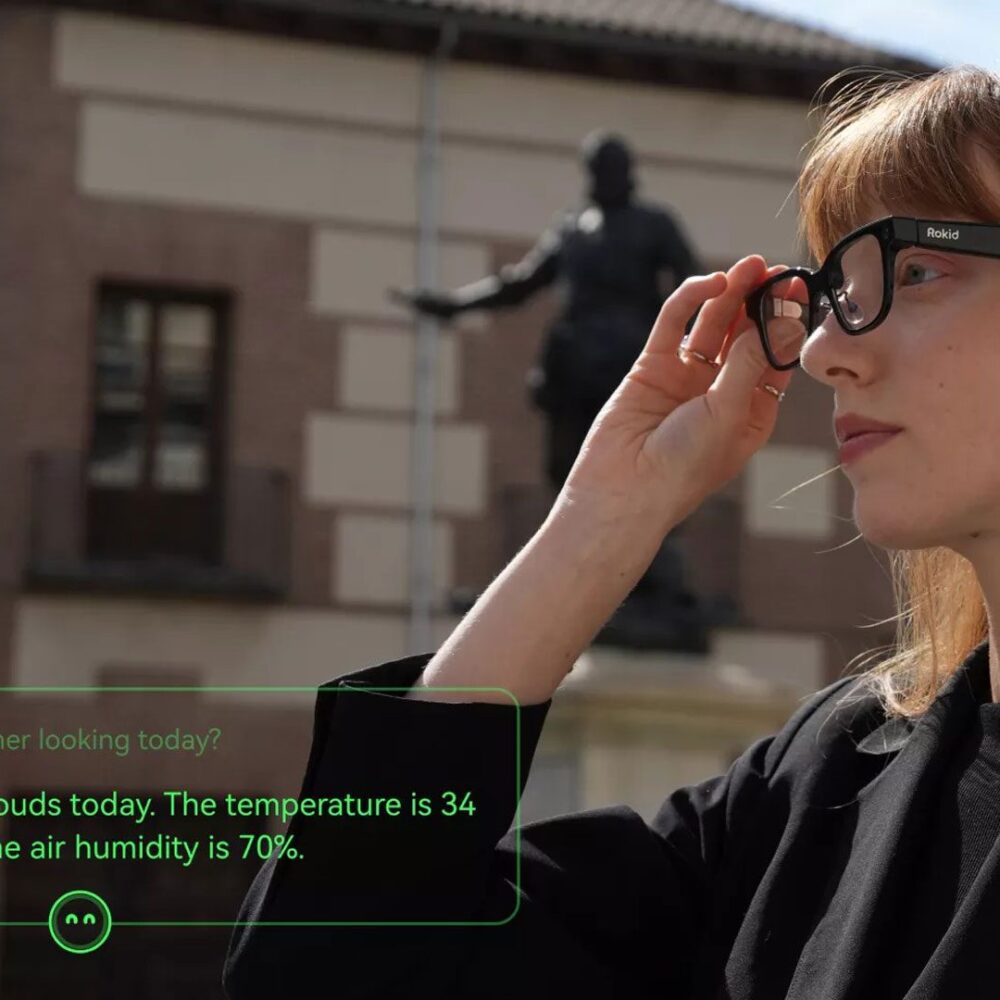

More precisely, smart glasses with heads-up displays (i.e. not augmented reality) appear to be the next hot commodity among Meta, Google, Amazon and possibly even Apple, which generally see them as stepping stones to all-day wearable AR glasses of the future.

These sorts of smart glasses are very different from Viture’s, however, and from full-AR glasses like Meta’s Orion prototype; smart glasses are essentially designed to offload daily tasks from the user’s smartphone, such as notifications, turn-by-turn directions, AI queries, and calls, as well as photo and video capture.

Check out this handy primer on the differences between smart glasses and AR glasses to learn more.